- AWS Glue is the orchestrator: visual ETL for spatial data transforms, Spark-based ETL for heavy lifting with Sedona support, and Python Shell for lightweight scripts. Start here

- S3 + COG: read any region of a 50GB raster in 50-200ms via HTTP range requests. Storage cost: $0.023/GB/month

- Athena spatial SQL: query GeoParquet on S3 without a database. $5 per TB scanned, zero infrastructure

- Lambda + Step Functions for event-driven pipelines. Cold start with GDAL: 8-12 seconds. Warm: 200-400ms. Best for batch and event processing

AWS has 200+ services but no “AWS for Geospatial” product. That is both the challenge and the opportunity. You assemble your own stack from Glue, S3, Lambda, Athena, and Step Functions.

Done right, it is cheaper and more flexible than any packaged solution. Done wrong, it is a labyrinth of cold starts, timeout errors, and unexpected bills. This post covers the patterns that work in production and the pitfalls we have hit building geospatial pipelines on AWS for clients processing terabytes of raster and vector data.

Geospatial in Cloud Series

This is Part 2 of our Geospatial in Cloud series. Each post is self-contained. Part 1 covers Databricks. Part 3 covers GCP. Part 4 covers Snowflake. Read the one that matches your stack.

AWS Geospatial Services

Eight AWS services matter for geospatial. Most production workloads use only five. Here is the full map so you know what exists - and what to ignore.

| SERVICE | GEOSPATIAL USE | WHEN TO USE |
|---|---|---|
| Glue | ETL orchestration (visual ETL, Spark ETL, Python Shell) | Primary orchestrator for spatial data pipelines |
| S3 | Storage (COG, GeoParquet, STAC catalogues) | Always - primary storage layer |
| Lambda | Serverless processing (raster tiles, vector transforms) | Event-driven, small-to-medium payloads |
| Athena | Spatial SQL on S3 data (GeoParquet) | Ad-hoc queries, no infrastructure needed |
| Step Functions | Pipeline orchestration | Multi-step workflows, coordinating Glue + Lambda |
| ECS/Fargate | Container-based processing | Heavy processing, long-running jobs |
| EMR (Spark) | Large-scale distributed processing | 100M+ records, when Glue Spark is insufficient |
| SageMaker | ML on geospatial data | Satellite imagery classification |

KEY INSIGHT: KEEP IT SIMPLE

Most geospatial workloads on AWS use only 5 services: Glue + S3 + Lambda + Athena + Step Functions. Glue is the spine - it handles ETL, spatial transforms, and Sedona-based processing. The bottom three in this table are specialist tools - reach for them only when the core five genuinely cannot handle your workload. Starting with EMR or SageMaker before trying Glue Spark ETL is over-engineering.

Glue: The Orchestrator

AWS Glue is where most geospatial pipelines should start. It comes in three flavours, each suited to a different scale of spatial workload. Python Shell for small data (under 1GB) - think geocoding a CSV or buffering a few thousand parcels. Spark ETL for heavy lifting - spatial joins on millions of features using Apache Sedona. And Visual ETL for drag-and-drop data transforms when your team includes analysts who do not write code.

The critical distinction: Glue Python Shell is single-node (max 1 DPU, 16GB memory). For anything involving spatial joins at scale, distributed raster processing, or datasets exceeding a few gigabytes, you need Glue Spark ETL. This is where Sedona comes in - Glue 4.0 supports Sedona natively, giving you distributed spatial SQL across a managed Spark cluster without provisioning a single server.

| GLUE FLAVOUR | WORKER TYPE | MEMORY | GEOSPATIAL USE |
|---|---|---|---|
| Python Shell | n/a (max 1 DPU) | 16 GB | Small vector ops with GeoPandas. Geocoding, buffering, format conversion |
| Spark ETL | G.2X | 32 GB | Distributed spatial joins, Sedona SQL, millions of features |
| Spark ETL | G.4X | 64 GB | Windowed raster processing, large COG operations |
| Spark ETL | G.8X | 128 GB | Memory-heavy ML + raster combined workloads (Glue workers are CPU-only) |

Sedona on Glue requires Spark ETL, not Python Shell. This is the single most common mistake we see in production. Teams set up a Python Shell job, try to import Sedona, and get cryptic import errors. Python Shell does not run Spark. For Sedona spatial SQL, you need a Spark ETL job with the Sedona JAR as a dependency.

Glue jobs install Python dependencies via the --additional-python-modules flag - comma-separated package names like geopandas, shapely, fiona, rasterio. For Sedona on Glue 4.0, the Spark JARs are pre-installed - you only need --additional-python-modules: apache-sedona for the Python API. On older Glue versions, use --extra-jars with S3 URIs pointing to JARs you have uploaded. A critical lesson from production: passing Maven coordinates instead of S3 URIs to --extra-jars causes a URISyntaxException that tells you nothing useful about the actual problem.
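A minimal sketch of how those flags can be assembled in code, assuming you define jobs programmatically. The helper name, the bucket, and the module file name are hypothetical; the three flag names are the ones described above. The check on S3 URIs is there to catch the Maven-coordinate mistake before Glue does.

```python
def glue_default_arguments(python_modules, extra_jars=(), extra_py_files=()):
    """Build the DefaultArguments mapping for a Glue job definition."""
    # Glue expects S3 URIs here; anything else (e.g. Maven coordinates)
    # fails later with an unhelpful error, so reject it up front.
    for uri in list(extra_jars) + list(extra_py_files):
        if not uri.startswith("s3://"):
            raise ValueError(f"expected an S3 URI, got {uri!r}")

    args = {"--additional-python-modules": ",".join(python_modules)}
    if extra_jars:
        args["--extra-jars"] = ",".join(extra_jars)
    if extra_py_files:
        args["--extra-py-files"] = ",".join(extra_py_files)
    return args


# Hypothetical job: Sedona's Python API plus two vector libraries.
args = glue_default_arguments(
    python_modules=["apache-sedona", "geopandas", "shapely"],
    extra_py_files=["s3://example-bucket/steps/step_load.py"],
)
```

The resulting dictionary is what you would pass as `DefaultArguments` when creating the job, for example through boto3's `create_job`.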

PRODUCTION WARNING: S3 IS NOT A FILESYSTEM

S3 is object storage. It does not support the seek operations that GDAL needs for writing GeoTIFFs or that SQLite needs for GeoPackage. The fix is the same two-stage write pattern used on Databricks Volumes: write to the local /tmp on the Glue worker, then upload the finished file to S3. Attempting to write directly to S3 paths fails silently or produces corrupt output. Every GIS library that does random I/O (rasterio, fiona, SQLite/GeoPackage) hits this.
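The two-stage write can be sketched as a small helper. This is an illustration under assumptions, not our exact production code: `two_stage_write`, `write_local`, and `upload` are hypothetical names, and the writer and uploader are passed in as callables so the pattern stays independent of any one GIS library.

```python
import os
import tempfile


def two_stage_write(write_local, upload, suffix=".tif"):
    """Write to local disk first, then hand the finished file to `upload`.

    write_local(path) must create the file at `path` (e.g. with rasterio).
    upload(path) must copy it to object storage (e.g. boto3's upload_file).
    """
    # mkstemp uses the system temp directory, typically /tmp on the worker.
    fd, local_path = tempfile.mkstemp(suffix=suffix)
    os.close(fd)  # the writer reopens the path itself
    try:
        write_local(local_path)
        if os.path.getsize(local_path) == 0:
            raise RuntimeError("writer produced an empty file; nothing uploaded")
        upload(local_path)
    finally:
        os.remove(local_path)  # keep the worker's local disk clean


# Stand-ins for a real raster writer and a real S3 upload.
uploaded = []

def fake_writer(path):
    with open(path, "wb") as f:
        f.write(b"II*\x00")  # TIFF magic bytes, just to have content

def fake_upload(path):
    with open(path, "rb") as f:
        uploaded.append(f.read())

two_stage_write(fake_writer, fake_upload)
```

On a Glue worker, `upload` would typically wrap `boto3.client("s3").upload_file(path, bucket, key)`, so the library doing random I/O only ever sees a local path.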

Code structure Glue expects: a main.py entry point (the ScriptLocation) that imports flat step modules listed in --extra-py-files as S3 URIs. No package hierarchy - Glue cannot resolve nested imports. The main.py initialises GlueContext and Sedona, parses runtime arguments via getResolvedOptions, then calls each step in sequence. This is fundamentally different from how local Python projects are structured, and it is the gap that causes most deployment failures when migrating from desktop ArcPy to cloud Glue.

TWO TRAPS THAT WASTE HOURS

Path() does not work with S3. Python's Path("s3://bucket/file").exists() silently returns False on S3 paths - even when the file exists. Use boto3's head_object for existence checks. Also: Glue ignores requirements.txt entirely. All dependencies must go through --additional-python-modules.

IAM role propagation takes 10-15 seconds. Creating a new IAM role and immediately starting a Glue job with it causes a cross-account pass role error. Add a propagation wait after role creation, or your CI/CD pipeline will fail intermittently on first deployment.
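For the first trap, here is a short sketch of why `Path` misleads you and what an existence check can look like instead. The helper names are hypothetical, and the client is passed in so the function works with any object exposing boto3's `head_object(Bucket=..., Key=...)` call. We assume a missing key surfaces as an error whose `response["Error"]["Code"]` is `"404"`, which is how botocore's `ClientError` reports it.

```python
from pathlib import PurePosixPath
from urllib.parse import urlparse

# pathlib collapses the double slash, so the URI is no longer a URI.
mangled = str(PurePosixPath("s3://bucket/data/file.tif"))  # "s3:/bucket/data/file.tif"


def split_s3_uri(uri):
    """Split 's3://bucket/key' into (bucket, key)."""
    parsed = urlparse(uri)
    if parsed.scheme != "s3" or not parsed.netloc:
        raise ValueError(f"not an S3 URI: {uri!r}")
    return parsed.netloc, parsed.path.lstrip("/")


def s3_object_exists(client, uri):
    """Return True if the object exists, False on a 404, re-raise anything else."""
    bucket, key = split_s3_uri(uri)
    try:
        client.head_object(Bucket=bucket, Key=key)
        return True
    except Exception as exc:
        # botocore's ClientError carries the error code in exc.response.
        code = getattr(exc, "response", {}).get("Error", {}).get("Code")
        if code in ("404", "NoSuchKey", "NotFound"):
            return False
        raise  # permission errors etc. should not be read as "missing"
```

In a Glue job you would call it as `s3_object_exists(boto3.client("s3"), "s3://bucket/data/file.tif")`. Re-raising non-404 errors matters: an access-denied response is not the same as a missing file.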

Glue replaces three legacy tools simultaneously: FME for ETL transforms (Glue + Sedona covers the same spatial operations), scheduled ArcPy scripts (Glue jobs with EventBridge cron triggers), and file geodatabase management (GeoParquet on S3 with Glue Data Catalog for schema discovery). The Glue Data Catalog acts as a metadata layer that makes your S3 data queryable from Athena without any additional infrastructure.

Lambda for Processing

The cold start problem is the number one issue nobody talks about openly. GDAL + rasterio + numpy together form a ~150MB Lambda layer. The first invocation after a period of inactivity takes 8-12 seconds to initialise. That is unacceptable for real-time APIs but perfectly fine for batch processing and event-driven pipelines.

LAMBDA COLD START - REAL NUMBERS

Lambda with 1769MB memory, us-east-1

Warm invocations run in 200-400ms for typical raster tile generation. The trick is deciding whether you need provisioned concurrency ($0.015/GB-hour) or can accept cold starts for batch and event-driven processing.

The production pattern is straightforward: Lambda receives an S3 event with the bucket and key of a newly uploaded raster. It opens the COG via GDAL's virtual filesystem (which issues HTTP range requests to S3), reads only the spatial window needed using the bounding box from the event payload, and writes the result back to S3. The entire operation touches only the pixels it needs - not the full file.
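The first step of that handler, turning the S3 event into something GDAL can open, can be sketched as follows. The function name is hypothetical; the event shape is the standard S3 notification structure, and `/vsis3/` is GDAL's virtual filesystem prefix for S3.

```python
from urllib.parse import unquote_plus


def vsis3_paths(event):
    """Translate an S3 event notification into GDAL /vsis3/ paths."""
    paths = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # Object keys arrive URL-encoded in S3 events (spaces become '+').
        key = unquote_plus(record["s3"]["object"]["key"])
        paths.append(f"/vsis3/{bucket}/{key}")
    return paths


# A trimmed-down example event with a hypothetical bucket and key.
sample_event = {
    "Records": [
        {"s3": {"bucket": {"name": "raw-imagery"},
                "object": {"key": "sentinel-2/tile+001.tif"}}}
    ]
}
paths = vsis3_paths(sample_event)
```

From there, the windowed read is a `rasterio.open(path)` followed by `src.read(1, window=rasterio.windows.from_bounds(*bbox, transform=src.transform))`, with `bbox` supplied by whatever part of your pipeline carries the bounding box.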

Deployment tip: use Docker images, not zip layers. The 250MB unzipped size limit for Lambda layers is too small for GDAL + rasterio + numpy. Docker-based Lambda images support up to 10GB, and community base images like lambgeo/lambda-gdal include GDAL, rasterio, and numpy pre-compiled for Lambda's Amazon Linux runtime. This eliminates the most common deployment failure.

S3 + COG Storage

Why COG matters on S3: HTTP range requests mean Lambda reads only the pixels it needs. A 50GB satellite image can serve a 1km x 1km tile in 50-200ms without downloading the entire file. This is the pattern that makes serverless geospatial economically viable.

Storage cost: $0.023/GB/month on S3 Standard. A 1TB raster catalogue costs $23/month. For archival data accessed less than once a month, S3 Glacier Instant Retrieval drops this to $0.004/GB/month - an 83% reduction.

In practice, you open a COG on S3 directly using GDAL's virtual filesystem driver. When you request a windowed read - say, the 1km x 1km area around a specific bounding box - GDAL translates that into HTTP range requests against S3. It reads only the internal tiles that overlap your window, typically 50-200ms for a 50GB raster. The rest of the file is never touched. This is why COG format matters: without internal tiling and overviews, GDAL would need to download the entire file to extract a small region.

S3 layout convention matters for cost. Partition your data by date and region using Hive-style prefixes (raw/sentinel-2/year=2024/month=06/) so Athena and Glue crawlers skip irrelevant files. Store processed outputs separately from raw inputs. And always use GeoParquet for vector data - it gives you columnar storage with spatial metadata that both Athena and Glue can exploit for predicate pushdown.
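A small sketch of building those Hive-style prefixes consistently, so every writer in the pipeline produces the same layout. The helper name is hypothetical, and zero-padding integers to two digits is our assumption about the convention (it matches the `month=06` example above).

```python
def hive_prefix(base, **partitions):
    """Build a Hive-style S3 prefix such as 'raw/x/year=2024/month=06/'."""
    parts = [base.strip("/")]
    for name, value in partitions.items():  # keyword order is preserved
        if isinstance(value, int):
            value = f"{value:02d}"  # month=6 -> month=06; 2024 stays 2024
        parts.append(f"{name}={value}")
    return "/".join(parts) + "/"


prefix = hive_prefix("raw/sentinel-2", year=2024, month=6)
```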

The STAC catalogue pattern ties this together: index your COGs on S3 with a STAC API. Query by date, bounding box, cloud cover - then access individual COGs directly via range requests. No database, no file server, just S3 + metadata.

For detail on COG, GeoParquet, and STAC formats, see our cloud-native geospatial formats guide.

Athena Spatial SQL

Athena lets you query GeoParquet data directly on S3 with standard SQL. No database to manage, no infrastructure to provision. Point Athena at an S3 bucket, define an external table via Glue Data Catalog, and query.

CRITICAL: ATHENA HAS ST_ FUNCTIONS BUT NO SPATIAL INDEX

Athena does ship geospatial functions such as ST_Contains and ST_Distance, but it has no persistent spatial index. A geometry predicate is evaluated row by row against everything the query scans, so geometry-based filters on large tables are slow and you pay for the full scan. The pattern that works: pre-compute H3 indices in your GeoParquet files (via Glue Spark ETL with Sedona) and use H3 cell lookups as your spatial filter in Athena. This is faster and cheaper than geometry-based queries - but it means your spatial indexing strategy must be designed at the data preparation stage, not at query time.

The workflow: Glue computes H3 indices and writes them as a column in your GeoParquet files. Athena queries filter by H3 cell values using simple equality or range predicates. No geometry comparison at query time, no spatial index to maintain. A query on "all parcels in Berlin" becomes a filter on H3 cells that intersect Berlin's boundary - computed once during data preparation, queried thousands of times in Athena.
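On the query side, the H3 lookup reduces to a plain `IN` filter. The sketch below builds that SQL from a list of cell IDs that you have already computed elsewhere (for example by covering Berlin's boundary during data preparation). The table name, the `h3_cell` column name, and the helper itself are hypothetical, and we assume cells are stored as 15-character lowercase hex strings.

```python
import re

_H3_CELL = re.compile(r"[0-9a-f]{15}")


def h3_filter_query(table, cells, column="h3_cell"):
    """Build an Athena query that filters rows by pre-computed H3 cells."""
    cells = sorted(set(cells))  # de-duplicate, keep the SQL deterministic
    if not cells:
        raise ValueError("need at least one H3 cell")
    for cell in cells:
        # Validate before interpolating into SQL.
        if not _H3_CELL.fullmatch(cell):
            raise ValueError(f"not an H3 cell id: {cell!r}")
    in_list = ", ".join(f"'{c}'" for c in cells)
    return f"SELECT * FROM {table} WHERE {column} IN ({in_list})"


query = h3_filter_query("parcels", ["8928308280fffff", "8928308280bffff"])
```

The string can then be submitted through the Athena console or boto3's `start_query_execution`. Because the filter is a simple equality on a column, Parquet column statistics can help skip row groups, which geometry predicates cannot do.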

Coordinate order warning: H3 functions expect (latitude, longitude) - the opposite of most GIS tools. If your H3 cells produce zero matches on data you know overlaps, check the axis order first. This is the same trap on Databricks Mosaic. On GCP (BigQuery), it is the reverse: ST_GEOGPOINT expects longitude first, latitude second. Every platform has its own convention.

Cost: $5 per TB of data scanned. With GeoParquet's columnar format, you typically scan 10-20% of the total data (only the columns you reference), so the effective cost is $0.50-$1.00 per query for each TB stored. For 100 queries a month on a 1TB dataset, that is roughly $50-100/month if every query touches every partition; partition pruning (below) is what brings it down to the $5-10/month range.
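The arithmetic behind these estimates is easy to get wrong by a factor of ten, so here it is as a small function. The function and parameter names are ours; the $5 per TB price and the 10-20% column fraction come from the text above, and `partition_fraction` models how much of the table survives partition pruning.

```python
def athena_monthly_cost(dataset_tb, column_fraction, queries_per_month,
                        partition_fraction=1.0, price_per_tb=5.0):
    """Estimate monthly Athena spend in USD.

    column_fraction:    share of bytes read thanks to columnar storage (0.1-0.2)
    partition_fraction: share of partitions a typical query touches (1.0 = all)
    """
    scanned_tb_per_query = dataset_tb * column_fraction * partition_fraction
    return scanned_tb_per_query * price_per_tb * queries_per_month


# 1TB dataset, 100 queries a month, 10% of the columns read.
unpartitioned = athena_monthly_cost(1.0, 0.10, 100)
# Same workload when each query only touches a tenth of the partitions.
partitioned = athena_monthly_cost(1.0, 0.10, 100, partition_fraction=0.10)
```

With these inputs the unpartitioned workload comes to about $50 a month and the partitioned one to about $5, which is why the partitioning advice matters as much as the file format.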

PARTITION YOUR DATA

Athena charges per TB scanned. Partitioning your GeoParquet files by region or date means Athena skips irrelevant files entirely. A query on Berlin parcels should not scan data for Munich. Use Hive-style partitioning (s3://bucket/parcels/country=DE/state=BE/) and your costs drop by 80-90%.

The limitation: Athena is not a real-time database. Query execution takes 3-15 seconds depending on data volume and complexity. For sub-second spatial queries serving a web application, use PostGIS on RDS. Athena is for ad-hoc analysis and batch reporting.

Step Functions Pipelines

Geospatial workflows are rarely a single operation. Satellite imagery arrives, needs validation, conversion to COG, quality checks, and catalogue updates. Step Functions orchestrates this without you managing any servers - and it coordinates both Glue jobs and Lambda functions in the same pipeline.

The key pattern from production: use the .sync suffix on Glue resource ARNs so Step Functions waits for job completion before advancing. Pass S3 URIs between states (never inline data - there is a 256KB payload limit). Set explicit TimeoutSeconds for long-running raster operations. And use Parallel branches when steps are independent - processing vectors and rasters simultaneously rather than sequentially can cut pipeline time by up to half when the branches take similar time.
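Those four rules fit in one small state machine. The sketch below expresses an Amazon States Language definition as a Python dictionary and serialises it to JSON. The state names, the Glue job name, the Lambda function name, and the timeout values are hypothetical; the `glue:startJobRun.sync` and `lambda:invoke` resource ARNs are the standard service integrations.

```python
import json

definition = {
    "StartAt": "ProcessInParallel",
    "States": {
        "ProcessInParallel": {
            "Type": "Parallel",  # independent branches run simultaneously
            "Branches": [
                {
                    "StartAt": "VectorEtl",
                    "States": {
                        "VectorEtl": {
                            "Type": "Task",
                            # .sync makes Step Functions wait for the Glue job
                            "Resource": "arn:aws:states:::glue:startJobRun.sync",
                            "Parameters": {
                                "JobName": "vector-etl",
                                # pass an S3 URI, never the data itself
                                "Arguments": {"--input_uri.$": "$.vector_uri"},
                            },
                            "TimeoutSeconds": 3600,
                            "End": True,
                        }
                    },
                },
                {
                    "StartAt": "RasterToCog",
                    "States": {
                        "RasterToCog": {
                            "Type": "Task",
                            "Resource": "arn:aws:states:::lambda:invoke",
                            "Parameters": {
                                "FunctionName": "raster-to-cog",
                                "Payload": {"raster_uri.$": "$.raster_uri"},
                            },
                            "TimeoutSeconds": 900,
                            "End": True,
                        }
                    },
                },
            ],
            "End": True,
        }
    },
}

asl_json = json.dumps(definition, indent=2)
```

The execution input would be a small JSON document such as `{"vector_uri": "s3://...", "raster_uri": "s3://..."}`, which stays far below the payload limit regardless of how large the files are.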

A real production pipeline - satellite imagery ingestion:

S3 Event: New Image Uploaded

S3 triggers the pipeline when a new GeoTIFF lands in the raw bucket. No polling, no cron jobs. Event-driven from the start.

Lambda 1: Validate and Extract Metadata

Check CRS, resolution, band count, and spatial extent. Reject malformed files before spending compute on processing. Extract metadata for the STAC catalogue.

Lambda 2: Generate COG Tiles

Convert raw GeoTIFF to Cloud Optimised GeoTIFF with internal tiling and overviews. This enables the range request pattern that makes serving tiles from S3 fast.

Lambda 3: Quality Checks

Verify the COG output: correct tile sizes, overview levels, spatial extent matches input. Catch processing errors before they propagate downstream.

Lambda 4: Update STAC Catalogue

Register the processed image in the STAC catalogue with metadata, thumbnail, and access links. The image is now discoverable and queryable.

PIPELINE COST PER IMAGE

Total: ~$0.03 per image (5 Lambda invocations + S3 reads/writes + Step Functions state transitions). At 1,000 images per day, that is about $30 per day, or roughly $900/month, for a fully automated ingestion pipeline with validation, conversion, quality assurance, and cataloguing.

Real Benchmarks

These numbers come from production workloads running in us-east-1. Lambda configured with 1769MB memory (1 full vCPU). S3 Standard storage class. We include the cold start numbers because pretending they do not exist helps nobody.

AWS GEOSPATIAL - PRODUCTION BENCHMARKS

Cost Analysis

The cost advantage of AWS for geospatial is real - but only if you have the engineering capability to build and maintain the stack. Here is the side-by-side comparison for a mid-sized team processing 10K tiles per day with 1TB of raster storage.

| STACK | MONTHLY TOTAL | LICENCE |
|---|---|---|
| ESRI | ~$1,000/mo | + Enterprise licence fee |
| AWS | ~$83/mo | No licence fees |

CAVEAT: ENGINEERING COST IS REAL

These numbers assume you have the engineering capability to build and maintain the AWS stack. ESRI's cost includes the convenience of a managed platform with documentation, support, and a GUI. If your team does not have AWS experience, the engineering cost of building and maintaining this stack may exceed the savings for 1-2 years. The 12x cost difference only materialises when your team can operate the stack independently.

When NOT to Use AWS for Geospatial

We build geospatial pipelines on AWS for clients. We still tell some of them not to. These are the scenarios where AWS is the wrong choice:

1. Your team does not have AWS experience

The learning curve is steep. Lambda layers, IAM roles, VPC networking, S3 event triggers - each has pitfalls that take weeks to understand. Budget 2-3 months ramp-up for a team new to AWS before you can build anything production-grade.

2. You need a managed geospatial platform

AWS does not have one. You assemble from primitives. If you want click-to-deploy geospatial with a GUI, vendor support, and documentation, consider ESRI on AWS or Databricks. The DIY approach requires genuine engineering investment.

3. Real-time spatial queries (sub-50ms)

Athena is not a real-time database. Minimum query time is 3 seconds regardless of data volume. For sub-50ms spatial queries serving a live web application, use PostGIS on RDS or Aurora with a spatial index. Lambda + Athena is for batch, not interactive.

4. Large-scale raster analysis

Lambda has a 15-minute execution limit and 10GB memory ceiling. For heavy raster processing - mosaicking large areas, time-series analysis on years of satellite data, multi-band classification - use ECS/Fargate or SageMaker Processing. Or consider Google Earth Engine which was built precisely for this workload.

5. You are already on Azure or GCP

Multi-cloud adds complexity with minimal benefit. If your organisation's primary cloud is Azure or GCP, use their native geospatial services. The architectural patterns in this post translate to any cloud - the specific services just have different names. Do not split your infrastructure for geospatial alone.

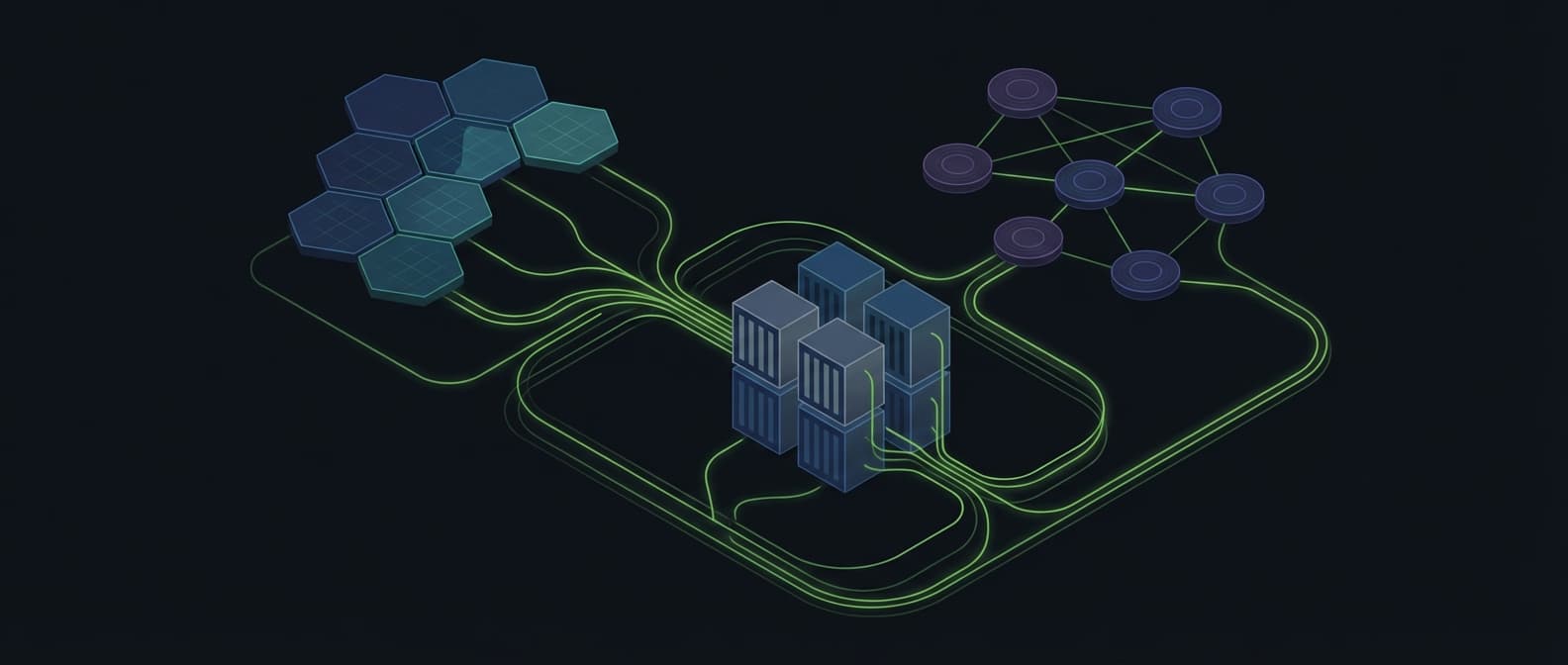

Reference Architecture

This is the production architecture we deploy for clients processing 1TB+ of geospatial data. Five layers, no over-engineering.

1. DATA INGESTION

2. ETL + SPATIAL PROCESSING

3. ANALYSIS

4. PIPELINE ORCHESTRATION

5. SERVING

The STAC catalogue sits alongside this: a Lambda-backed API with DynamoDB storage that indexes all processed data. Users query the catalogue by bounding box, date range, or sensor type, then access individual COGs directly from S3 via range requests. Total infrastructure cost for the catalogue: under $10/month.

Frequently Asked Questions

Can AWS handle geospatial workloads?

Yes, but there is no single “AWS Geospatial” service. You combine Glue (ETL), S3 (storage), Lambda (processing), Athena (spatial SQL), and Step Functions (orchestration). This gives maximum flexibility but requires engineering effort to assemble. Most production geospatial workloads on AWS use only these five services.

What is the cold start time for Lambda with GDAL?

Cold start with a full GDAL/rasterio Lambda layer is 8-12 seconds. Warm invocations run in 200-400ms. For latency-sensitive workloads, use provisioned concurrency ($0.015/GB-hour) to keep functions warm, reducing cold start to under 0.5 seconds.

How much does geospatial processing cost on AWS?

Storage: $0.023/GB/month on S3. Processing: approximately $0.0001 per Lambda invocation for typical tile generation. Spatial queries: $5 per TB scanned via Athena. A typical monthly workload (1TB storage, 10K daily tile requests, weekly ad-hoc queries) costs approximately $50-80/month.

AWS gives you maximum control at minimum cost - if you have the engineering capability to assemble and maintain the stack.

$0.03 per image through a full pipeline. $23/month for a terabyte of rasters. Spatial SQL at $5 per TB scanned. The economics are compelling for teams that can operate the infrastructure. For everyone else, a managed platform saves more than it costs.

The pattern is the same regardless of cloud: store data in cloud-native formats, process with serverless compute, query with spatial SQL, orchestrate with managed workflows. AWS just happens to give you the most granular control over each piece.
